The Benefits of AI-Powered Live Captions for Event Accessibility

In an increasingly interconnected world, the accessibility of events, whether physical or virtual, is crucial. Ensuring that all participants, regardless of their abilities, can fully engage with content is essential for inclusivity. One technological advancement driving this change is AI-powered live captions. Using artificial intelligence (AI) and machine learning (ML), these captions provide real-time transcriptions of speech, so that people with hearing impairments, people facing language barriers, and people in noisy environments can access the content more effectively.

This article explores the role of AI-powered live captioning in enhancing event accessibility, covering its benefits, its challenges, and the underlying technologies that make it possible. It also includes structured lists of key advantages and challenges and presents comparative data in table format to show why live captions matter for event inclusivity.

What Are AI-Powered Live Captions?

AI-powered live captions use Automatic Speech Recognition (ASR) systems powered by artificial intelligence to convert spoken language into text in real time. These captions appear either at the bottom of the video or in a separate text box, allowing viewers to follow the dialogue or presentation, even in scenarios where they cannot hear clearly or are unfamiliar with the language.

Key Components of AI-Powered Live Captions:

- Automatic Speech Recognition (ASR): This technology detects speech patterns and converts spoken language into text by recognizing phonetic units and matching them to words.

- Natural Language Processing (NLP): Once ASR transcribes speech, NLP refines the text to ensure grammatical accuracy and contextual relevance.

- Machine Learning (ML): AI-based systems use ML models trained on large amounts of speech data. Retraining or adapting these models on new data can improve the accuracy of live captions over time.

The Importance of Event Accessibility

Ensuring event accessibility is not just a matter of inclusivity but is often a legal requirement in many countries. Accessibility features, such as live captions, are key for making sure events can be attended by individuals with varying needs, including those with hearing disabilities or those who speak different languages. As virtual and hybrid events become more common, accessibility solutions, like AI-powered live captions, enable broader audience engagement.

Why Event Accessibility Matters:

- Inclusivity: Ensuring that everyone, regardless of their physical or cognitive abilities, can participate in events.

- Legal Compliance: Many regions mandate accessibility features for public events under laws like the Americans with Disabilities Act (ADA) or the European Accessibility Act (EAA).

- Increased Engagement: Accessible events cater to wider audiences, including those who prefer or need to read along, such as non-native speakers or those in noisy environments.

- Global Reach: Events that include captions can bridge language gaps, making them accessible to a more diverse, international audience.

The Role of AI-Powered Live Captions in Event Accessibility

Real-Time Accessibility for People with Hearing Impairments

For individuals with hearing impairments, real-time captions are essential for participating in events. AI-powered live captions ensure that these individuals can read the speech as it happens, allowing them to fully engage with the content. Unlike traditional manual captioning, AI-powered solutions can provide immediate transcriptions without the need for pre-prepared scripts or human captioners.

Enhancing Comprehension in Noisy Environments

In settings where audio clarity is compromised due to external noise or suboptimal acoustics, live captions can provide an alternative mode of information consumption. Attendees can rely on captions to follow the discussion when auditory channels are obstructed.

Bridging Language Barriers

AI-powered live captions are not limited to a single language. Advanced systems can simultaneously translate speech into multiple languages, allowing event organizers to cater to international audiences. This multilingual support enhances the inclusivity of global events, enabling participation from individuals who may not be fluent in the spoken language.

Improved Audience Retention and Engagement

Studies have shown that captions improve comprehension and retention, even for native speakers. By reading and listening to content simultaneously, participants are more likely to understand and remember key points. AI-powered live captions enhance the audience’s cognitive engagement, leading to a more fulfilling event experience.

Key Benefits of AI-Powered Live Captions for Event Accessibility

- Real-Time Transcriptions: Provide immediate accessibility for individuals with hearing impairments, enabling them to follow live events as they occur.

- Multilingual Support: AI-powered systems can generate captions in multiple languages, catering to global audiences.

- Scalability: These systems can be deployed for large-scale events without the need for extensive human resources.

- Consistency and Accuracy: Automated systems apply the same models across different events, so captions stay consistent, and accuracy can improve over time as the models are updated.

- Enhanced Comprehension: Viewers can follow along with complex or technical content more easily by reading captions.

- Cost-Effective: Reduces the costs associated with hiring human transcriptionists for real-time captioning services.

- Improved Engagement: Boosts audience engagement by providing an alternative medium to process information, particularly in environments where audio may be unclear.

Technical Breakdown: How AI-Powered Live Captions Work

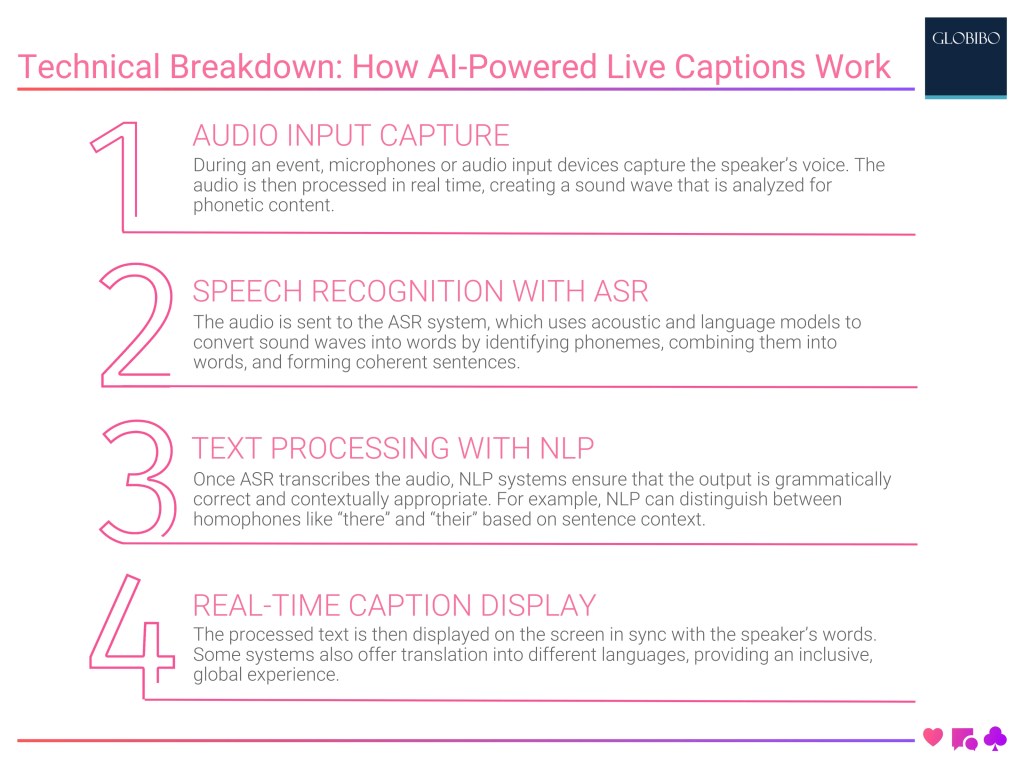

Audio Input Capture

During an event, microphones or other audio input devices capture the speaker’s voice. The sound is digitized and processed in real time, producing a signal that is analyzed for phonetic content.
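As a rough illustration of what "processed in real time" can mean, the sketch below splits a stream of digitized samples into short overlapping frames, the unit a recognizer typically works on. The 16 kHz sample rate, the 25 ms frame, the 10 ms hop, and the function names are assumptions chosen for the example, not values taken from any particular captioning product.

```python
import math

def frame_samples(samples, frame_size, hop_size):
    """Split a sequence of audio samples into overlapping fixed-size frames."""
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop_size):
        frames.append(samples[start:start + frame_size])
    return frames

def rms(frame):
    """Root-mean-square energy of one frame (a crude loudness measure)."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))

# Assumed setup: one second of a synthetic 440 Hz tone sampled at 16 kHz,
# standing in for microphone input.
SAMPLE_RATE = 16_000
tone = [math.sin(2 * math.pi * 440 * n / SAMPLE_RATE) for n in range(SAMPLE_RATE)]

# 25 ms frames (400 samples) taken every 10 ms (160 samples).
frames = frame_samples(tone, frame_size=400, hop_size=160)
print(len(frames), round(rms(frames[0]), 3))
```

Frame energy like this is one simple way to tell speech from silence before any recognition is attempted; production systems generally use more robust voice activity detection.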

Speech Recognition via ASR

The captured audio is transmitted to the ASR system, which uses acoustic and language models to match the sound wave to words. This process includes identifying individual phonemes, combining them into words, and constructing coherent sentences.
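The step of combining phonemes into words can be pictured with a deliberately simplified sketch. Real ASR systems score many candidate word sequences with statistical or neural acoustic and language models; the toy below only does a greedy longest-match lookup in a tiny hand-written lexicon. The lexicon, the phoneme symbols, and the `decode` function are all hypothetical and exist only for illustration.

```python
# Hypothetical pronunciation lexicon: phoneme sequence -> word.
LEXICON = {
    ("HH", "AH", "L", "OW"): "hello",
    ("W", "ER", "L", "D"): "world",
    ("HH", "AY"): "hi",
}
MAX_PHONEMES = max(len(key) for key in LEXICON)

def decode(phonemes):
    """Greedily map a phoneme sequence to words, longest match first."""
    words = []
    i = 0
    while i < len(phonemes):
        # Try the longest possible phoneme span first, then shorter ones.
        for length in range(min(MAX_PHONEMES, len(phonemes) - i), 0, -1):
            candidate = tuple(phonemes[i:i + length])
            if candidate in LEXICON:
                words.append(LEXICON[candidate])
                i += length
                break
        else:
            # No lexicon entry starts here: emit a placeholder and move on.
            words.append("<unk>")
            i += 1
    return " ".join(words)

print(decode(["HH", "AH", "L", "OW", "W", "ER", "L", "D"]))  # hello world
```

The `<unk>` placeholder hints at why uncommon vocabulary is hard: a word that the models have never seen has nothing to match against.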

Text Processing with NLP

Once ASR transcribes the audio, NLP systems ensure that the output is grammatically correct and contextually appropriate. For example, NLP can distinguish between homophones like “there” and “their” based on sentence context.
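A minimal sketch of context-based homophone correction follows. It assumes a tiny table of made-up bigram counts and picks whichever spelling is more often followed by the next word; production systems use far larger language models, and every name and number here is hypothetical.

```python
# Words that sound alike and may be confused in a raw transcript.
HOMOPHONES = {
    "there": ("their", "there"),
    "their": ("their", "there"),
}

# Hypothetical counts of (word, next word) pairs from some text corpus.
BIGRAM_COUNTS = {
    ("their", "house"): 5,
    ("their", "team"): 4,
    ("there", "is"): 6,
    ("there", "are"): 6,
}

def fix_homophones(words):
    """Replace a homophone with the spelling best supported by the next word."""
    fixed = []
    for i, word in enumerate(words):
        options = HOMOPHONES.get(word)
        next_word = words[i + 1] if i + 1 < len(words) else None
        if options and next_word is not None:
            best = max(options, key=lambda c: BIGRAM_COUNTS.get((c, next_word), 0))
            # Only change the word when the table offers some evidence.
            if BIGRAM_COUNTS.get((best, next_word), 0) > 0:
                word = best
        fixed.append(word)
    return fixed

print(" ".join(fix_homophones("their are two sessions at there house".split())))
# there are two sessions at their house
```

When the table has no evidence either way, the sketch leaves the recognizer's original choice untouched rather than guessing.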

Real-Time Captioning Display

The processed text is then displayed on the screen in sync with the speaker’s words. Some systems also offer translation into different languages, providing an inclusive, global experience.
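One common way to keep text in sync with speech is to attach start and end times to each caption. The sketch below formats a timed caption as a WebVTT cue, a widely used web caption format, and wraps the text to a short line width. The 32-character width and the function names are assumptions made for the example.

```python
import textwrap

def vtt_timestamp(seconds):
    """Format a time offset in seconds as a WebVTT timestamp (hh:mm:ss.ttt)."""
    ms = round(seconds * 1000)
    hours, ms = divmod(ms, 3_600_000)
    minutes, ms = divmod(ms, 60_000)
    secs, ms = divmod(ms, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"

def vtt_cue(start, end, text, width=32):
    """Build one WebVTT cue: a timing line followed by wrapped caption text."""
    lines = textwrap.wrap(text, width=width)
    timing = f"{vtt_timestamp(start)} --> {vtt_timestamp(end)}"
    return timing + "\n" + "\n".join(lines)

print("WEBVTT\n")
print(vtt_cue(0, 2.5, "Welcome to the opening keynote of the conference"))
```

Short lines matter on screen: a caption that wraps to two brief lines is easier to read at speaking pace than one long line.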

AI-Powered Live Captioning Process

| Step | Description |
| --- | --- |
| Audio Input Capture | Captures audio signals from the event environment. |
| Speech Recognition (ASR) | Converts audio signals into phonetic representations and transcribes them into text. |
| Natural Language Processing | Refines the transcribed text, ensuring grammatical and contextual accuracy. |
| Real-Time Caption Display | Displays the captions in sync with the speaker’s voice, offering translation support if needed. |
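The four steps can be chained into a single loop, as in the following sketch. The recognizer and the refinement step are stand-ins: `fake_recognize` simply looks up canned text and `simple_refine` only fixes capitalization and end punctuation. In a real deployment these would be calls to an ASR engine and an NLP component, which are not shown here. All names are hypothetical.

```python
def run_caption_pipeline(audio_chunks, recognize, refine, display):
    """Pass each audio chunk through recognition, refinement, and display."""
    for chunk in audio_chunks:
        raw_text = recognize(chunk)
        if not raw_text.strip():
            continue  # silence or nothing recognized: show no caption
        display(refine(raw_text))

# Stand-in for an ASR engine: canned transcripts keyed by chunk contents.
FAKE_TRANSCRIPTS = {
    b"chunk-1": "welcome everyone",
    b"chunk-2": "",
    b"chunk-3": "lets get started",
}

def fake_recognize(chunk):
    return FAKE_TRANSCRIPTS.get(chunk, "")

def simple_refine(text):
    """Stand-in for NLP refinement: capitalize and add a final period."""
    text = text.strip()
    text = text[0].upper() + text[1:]
    if text[-1] not in ".?!":
        text += "."
    return text

shown = []  # stand-in for the on-screen caption area
run_caption_pipeline(
    [b"chunk-1", b"chunk-2", b"chunk-3"], fake_recognize, simple_refine, shown.append
)
print(shown)  # ['Welcome everyone.', 'Lets get started.']
```

Passing the stages in as functions keeps the loop independent of any one vendor, so a different recognizer or a translation step could be swapped in without changing the flow.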

Challenges of AI-Powered Live Captions

Despite their many benefits, AI-powered live captions face certain challenges:

- Accurate Recognition in Noisy Environments: While AI systems have improved, background noise can still cause transcription errors.

- Limited Contextual Understanding: AI can struggle with context-specific language, such as idiomatic expressions, resulting in less accurate captions.

- Latency Issues: Although captions are generated live, there is a slight delay between speech and the appearance of the text, which may disrupt audience engagement.

- Difficulty with Accents and Dialects: AI systems may have difficulty accurately transcribing speech with strong regional accents or unique dialects.

- Handling Specialized Terminology: AI systems can struggle with industry-specific jargon, scientific terms, or uncommon vocabulary that is not in their training data.
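Accuracy problems like those listed above are commonly quantified with word error rate (WER): the number of word substitutions, deletions, and insertions needed to turn the system's output into a reference transcript, divided by the number of words in the reference. The sketch below computes it with a standard edit-distance table; the example sentences are invented.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / words in reference."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # reference word missing from output
                curr[j - 1] + 1,     # extra word in output
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)

print(word_error_rate("the keynote starts at nine", "the keynote start at nine"))  # 0.2
```

Comparing WER on recordings with and without background noise, or across speakers with different accents, is one way an organizer could check how a captioning system is likely to perform before an event.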

Real-World Applications of AI-Powered Live Captions

Corporate Events and Conferences

In corporate settings, AI-powered live captions are often used for webinars, meetings, and large-scale conferences. These events are increasingly held online or in hybrid formats, necessitating captions for accessibility and inclusivity. AI-powered systems offer a cost-effective, scalable solution for providing real-time captions to large audiences, especially in global events where multiple languages are spoken.

Educational Institutions

Educational webinars, lectures, and courses can leverage AI-powered live captions to improve accessibility for students with hearing impairments or those who speak English as a second language. Captions also enhance comprehension for all students, making complex topics more digestible.

Broadcasting and Live Streaming

Live broadcasting platforms, such as YouTube and Facebook, have integrated AI-powered live captions into their systems, allowing creators to reach a broader, more diverse audience. For broadcasters, AI-powered captions reduce the need for human transcription services, improving accessibility while minimizing costs.

Comparison: AI-Powered Live Captions vs. Human Captioning

Although human captioning services still play an important role in many events, AI-powered live captions are rapidly becoming the preferred choice due to their speed, scalability, and cost efficiency. Below is a comparison of the two approaches across key criteria.

AI-Powered Live Captions vs. Human Captioning

| Criteria | AI-Powered Live Captions | Human Captioning |
| --- | --- | --- |
| Speed | Real-time transcription with minimal latency | Delayed due to human processing |
| Accuracy | High, though may struggle with accents or specialized terms | Very high, especially with specialized or technical content |
| Cost | Lower costs, as AI reduces the need for human labor | Higher costs due to labor requirements |
| Scalability | Scalable for large or multilingual events | Limited scalability due to the need for human resources |
| Contextual Understanding | Limited contextual understanding; may misinterpret nuances | Strong understanding of context and idiomatic language |

Future Developments in AI-Powered Live Captions

The future of AI-powered live captions looks promising, with advancements likely to address current limitations. Here are some areas of development:

- Improved Accuracy for Accents and Dialects: Enhanced training models that can better handle diverse accents and dialects.

- Contextual AI: Developing AI systems capable of understanding contextual nuances, such as idioms, jokes, and cultural references.

- Integration with Other Accessibility Tools: Future solutions may integrate with augmented reality (AR) or virtual reality (VR) technologies to display captions in immersive environments.

Conclusion

AI-powered live captions represent a significant advancement in making events more accessible to a global audience. By providing real-time, scalable, and cost-effective captioning solutions, AI helps to break down communication barriers for individuals with hearing impairments, non-native speakers, and participants in noisy environments. Despite some challenges related to accuracy, latency, and contextual understanding, AI-powered live captions are constantly evolving, offering increasingly robust solutions for event accessibility.

As AI technologies continue to advance, the future of live captions looks bright, paving the way for even more inclusive and engaging events worldwide.

Rick Lee

Project Manager – Event Technology

With over 10 years of experience in event technology, Rick is an expert in integrating cutting-edge tech solutions for seamless event execution. His expertise includes audio-visual setups, interactive displays, and live-streaming technologies. Rick’s innovative approach ensures every event is technologically advanced and highly engaging.
