Live AI Transcription Platforms: Real-Time Speech to Text

Live AI Transcription — the conversion of spoken language into text in real time using artificial intelligence — has emerged as a critical technological capability across sectors, including accessibility, media, enterprise communications, and real-time analytics. Rather than processing pre-recorded audio, Live AI Transcription systems ingest streaming audio, perform on-the-fly inference, and deliver text with minimal delay. This article examines the technical foundations, performance metrics, real-world constraints, and prospects of these platforms as of 2026, drawing on peer-reviewed research, engineering evaluations, and performance standards established in current computational linguistics and speech recognition literature.

The Foundations of Live AI Transcription

1. What Defines Real-Time Speech-to-Text

At its core, Live AI Transcription relies on Automatic Speech Recognition (ASR) models optimized for streaming input rather than batch processing. Traditional speech recognition has historically operated on complete audio files, processing audio after capture with no requirement for immediate usability. In contrast, real-time systems must fragment continuous speech into segments short enough to process swiftly, a non-trivial challenge because abrupt segmentation can degrade recognition quality. Researchers have examined different fragmentation strategies, including fixed-interval splitting and voice activity detection (VAD), to balance latency against accuracy. Experimental results show that VAD-based approaches yield higher transcription quality at the cost of latency, while fixed intervals produce lower latency but reduced accuracy, underscoring foundational trade-offs in live transcription systems. (Source: Assessing Latency in ASR Systems: A Methodological Perspective for Real-Time Use)
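The two fragmentation strategies can be contrasted with a minimal sketch. It assumes audio arrives as a list of float samples in the range -1.0 to 1.0, and it uses a simple RMS energy threshold as a stand-in for VAD; the cited study may use a different detector. The function names and the default values for `threshold` and `min_silence_frames` are illustrative assumptions, not settings from the source.

```python
import math
from typing import List, Sequence, Tuple

Segment = Tuple[int, int]  # (start_sample, end_sample), end exclusive


def fixed_interval_segments(num_samples: int, sample_rate: int,
                            interval_s: float) -> List[Segment]:
    """Cut the stream into back-to-back chunks of interval_s seconds."""
    step = max(1, round(sample_rate * interval_s))
    return [(start, min(start + step, num_samples))
            for start in range(0, num_samples, step)]


def energy_vad_segments(samples: Sequence[float], sample_rate: int,
                        frame_s: float = 0.02, threshold: float = 0.01,
                        min_silence_frames: int = 5) -> List[Segment]:
    """Return speech regions found with a per-frame RMS energy threshold.

    A segment closes only after min_silence_frames consecutive quiet
    frames, so short pauses inside a phrase do not split it. Waiting
    for that silence is where the extra latency comes from.
    """
    frame_len = max(1, round(sample_rate * frame_s))
    segments: List[Segment] = []
    seg_start = None
    silence_run = 0
    for i in range(0, len(samples), frame_len):
        frame = samples[i:i + frame_len]
        rms = math.sqrt(sum(x * x for x in frame) / len(frame))
        if rms >= threshold:
            if seg_start is None:
                seg_start = i
            silence_run = 0
        elif seg_start is not None:
            silence_run += 1
            if silence_run >= min_silence_frames:
                # End the segment where the run of quiet frames began.
                seg_end = i - (silence_run - 1) * frame_len
                segments.append((seg_start, seg_end))
                seg_start = None
                silence_run = 0
    if seg_start is not None:
        # Stream ended while a segment was still open.
        segments.append((seg_start, len(samples)))
    return segments


if __name__ == "__main__":
    # Synthetic stream at 1 kHz: silence, speech, silence, speech, silence.
    audio = [0.0] * 200 + [0.5] * 300 + [0.0] * 200 + [0.5] * 100 + [0.0] * 200
    print(fixed_interval_segments(len(audio), 1000, 0.3))
    print(energy_vad_segments(audio, 1000))
```

Fixed intervals can hand a chunk to the recognizer as soon as the interval elapses, but a boundary may fall mid-word. The VAD variant aligns boundaries with pauses, which tends to help recognition, but it cannot emit a segment until the trailing silence has been observed.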

2. End-to-End ASR Architectures

Modern live transcription tends to employ end-to-end (E2E) neural architectures that unify acoustic, pronunciation, and language modeling components into a single model. These systems eschew classical ASR pipelines, which separate feature extraction, acoustic modeling, and decoding stages, in favor of learned internal representations. Transformer-based models and attention mechanisms, especially when adapted for low-latency inference, have shown particular promise in live transcription tasks. Research prototypes such as Moonshine exemplify this trend by optimizing transformer inference for real-time speech input and achieving computational efficiency without sacrificing word error rate (WER) performance. (Source: Moonshine: Speech Recognition for Live Transcription and Voice Commands)

Technical Performance Metrics

1. Latency

Latency — the delay between speech capture and text output — is the principal metric distinguishing effective Live AI Transcription services from batch or near-real-time systems. Ideal real-time performance strives to produce partial transcript hypotheses within a few hundred milliseconds and final, polished text in under one second. In high-stakes applications such as live captioning or interactive conversational agents, delays above roughly 500 milliseconds can disrupt cognitive flow and impede usability.

Benchmarks in recent engineering research indicate that well-designed real-time systems can keep end-to-end processing times under 1.1 seconds while delivering accurate text output across a variety of noise and accent conditions. Furthermore, on-device real-time engines (e.g., those optimized for edge deployment) demonstrate that ultra-low latency is feasible even in hardware-constrained environments, challenging the assumption that cloud computing is always necessary. (Source: Real-time Transcription Benchmark)
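Latency figures like these are usually reported as a distribution rather than a single number. The following is a minimal sketch of such a summary, assuming each utterance is logged as a pair of timestamps in milliseconds (when the speech ended and when its text was emitted). The function names, the event format, and the nearest-rank percentile are illustrative choices; the 500 ms default budget reflects the usability threshold mentioned earlier in this section.

```python
import math
from statistics import median
from typing import Dict, List, Sequence, Tuple


def nearest_rank_percentile(values: Sequence[float], pct: float) -> float:
    """Percentile by the nearest-rank method (no interpolation)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def latency_report(events: List[Tuple[float, float]],
                   budget_ms: float = 500.0) -> Dict[str, float]:
    """Summarize latency for (speech_end_ms, text_emitted_ms) pairs."""
    if not events:
        raise ValueError("at least one event is required")
    latencies = [emitted - spoken for spoken, emitted in events]
    if any(lat < 0 for lat in latencies):
        raise ValueError("text cannot be emitted before the speech ended")
    return {
        "median_ms": median(latencies),
        "p95_ms": nearest_rank_percentile(latencies, 95),
        # Share of utterances that exceeded the latency budget.
        "over_budget": sum(lat > budget_ms for lat in latencies) / len(latencies),
    }


if __name__ == "__main__":
    # Hypothetical timestamps, for illustration only.
    log = [(0, 200), (1000, 1300), (2000, 2400), (3000, 3900)]
    print(latency_report(log))
```

Tail percentiles matter more than the average here: a system with a good median can still feel unusable for live captioning if a noticeable share of utterances arrive late.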

2. Accuracy

Accuracy in speech recognition is typically measured via Word Error Rate (WER): the number of insertions, deletions, and substitutions divided by the number of words in the ground-truth text. Emerging systems targeting real-time deployment have reported WERs below 5% in controlled conditions, approaching human-level rates when audio quality is high and background noise is minimal. However, independent benchmarking highlights substantial performance variability across environments and speakers, particularly for streaming ASR services under real-world conditions. These studies show that real-time accuracy often lags behind offline transcription, especially when audio contains overlapping voices, noise, or domain-specific vocabulary. (Source: Measuring the Accuracy of Automatic Speech Recognition Solutions)
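As a concrete illustration, WER can be computed as a word-level edit distance divided by the reference length. The sketch below assumes whitespace tokenization and lowercasing as the only normalization; production evaluations typically also normalize punctuation, numerals, and spelling variants, which this example does not attempt.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Levenshtein distance over words, keeping only two rows of the table.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    ref = "the quick brown fox jumps"
    hyp = "the quick brown box jumps high"
    # One substitution (fox -> box) and one insertion (high): 2 / 5
    print(word_error_rate(ref, hyp))  # 0.4
```

Because insertions count as errors but do not increase the denominator, WER can exceed 100% when a hypothesis is much longer than its reference.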

In broader market statistics, the leading AI transcription engines cited in industry surveys approach 99% accuracy in ideal settings, but average platforms score far lower, sometimes near 62% in uncontrolled environments. This disparity underscores the importance of choosing systems that balance low latency with robust model training and noise suppression.

3. Language and Context Sensitivity

Beyond raw word matching, high-quality Live AI Transcription integrates Natural Language Processing (NLP) to improve contextual interpretation, correct orthographic errors, and handle industry-specific terminology. While pure acoustic models focus on pattern recognition, hybrid systems combining ASR with NLP post-processing can significantly improve readability and semantic accuracy. Research shows that incorporating token-level NLP correction mechanisms enhances transcription quality, especially in edge systems designed for interactive applications.
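One simple form of token-level correction is to snap near-miss tokens onto a known domain vocabulary. The sketch below uses fuzzy string matching from Python's standard `difflib` module as a stand-in; it is not the correction mechanism from the research mentioned above, and the function name, the `cutoff` value, and the example term list are assumptions for illustration.

```python
import difflib
from typing import Iterable, List


def correct_tokens(tokens: Iterable[str], domain_terms: Iterable[str],
                   cutoff: float = 0.8) -> List[str]:
    """Replace tokens that closely resemble a domain term with that term.

    Tokens with no sufficiently similar term are passed through unchanged.
    """
    # Map lowercase forms back to the preferred spelling of each term.
    lookup = {term.lower(): term for term in domain_terms}
    candidates = list(lookup)
    corrected = []
    for token in tokens:
        match = difflib.get_close_matches(token.lower(), candidates,
                                          n=1, cutoff=cutoff)
        corrected.append(lookup[match[0]] if match else token)
    return corrected


if __name__ == "__main__":
    raw = "patient given metforman daily".split()
    print(" ".join(correct_tokens(raw, ["metformin"])))
```

A high cutoff keeps the corrector conservative, which matters in live use: a wrong "correction" of an ordinary word is usually worse than leaving a misspelled term in place. Character-level similarity also ignores context, so real systems typically combine it with a language model or phonetic matching.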

Architectural Choices: Cloud vs On-Device

1. Cloud-Based Transcription Services

Cloud solutions remain dominant for Live AI Transcription due to their ability to leverage scalable compute resources and continually updated models. These services stream audio to powerful remote servers, process speech with large neural networks, and return transcriptions to the client. Cloud-based platforms excel in language coverage and model complexity, but they are inherently subject to network latency and privacy concerns.

Despite these limitations, hybrid architectures attempt to mitigate latency risks by combining local pre-processing with cloud-side refinement, reducing round-trip overhead while maintaining high accuracy.

2. On-Device and Edge Inference

Recent research has demonstrated that on-device transcription, where the entire speech-to-text pipeline runs locally, can achieve both low latency and strong transcription quality without relying on cloud connectivity. These architectures typically use lightweight models and modules optimized for embedded inference, and may include browser-based audio capture with WebSockets for efficient streaming.

On-device transcription provides clear advantages in privacy-sensitive domains (e.g., clinical documentation, legal settings) because audio data remains on the local device. It also supports operation in bandwidth-limited environments. However, the computational constraints of mobile or edge hardware can limit model size, with trade-offs in vocabulary breadth and context understanding.

Use Cases and Applications

1. Accessibility

Live AI Transcription has become indispensable for accessibility, especially in providing real-time captions for individuals who are deaf or hard of hearing. With improved models and faster inference, live captioning platforms can offer near-instant text alongside spoken content, significantly enhancing participation in educational settings, conferences, and public events.

2. Media and Broadcasting

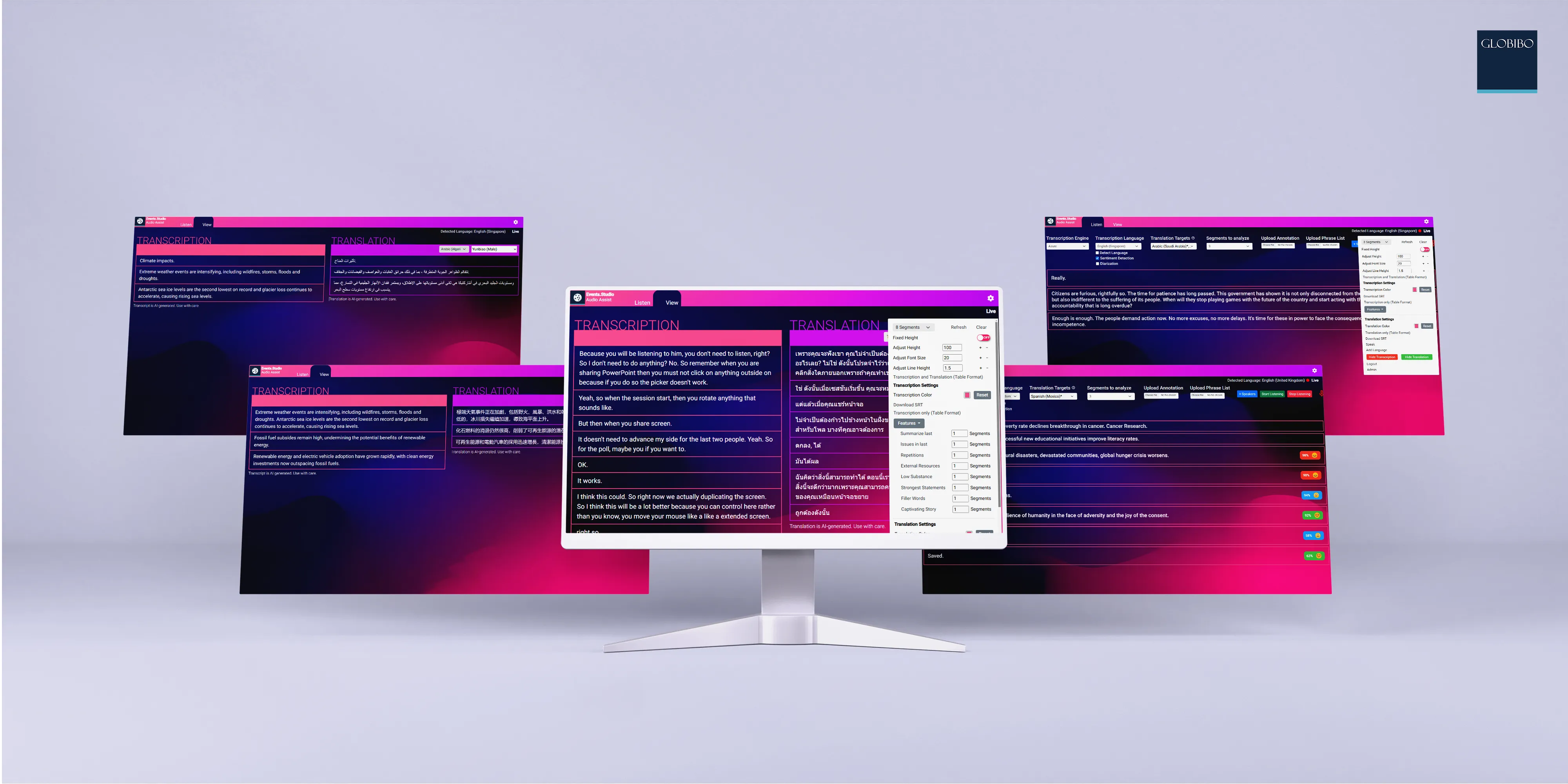

In live television, streaming, and broadcast scenarios, real-time captioning and translation services enable compliance with accessibility standards and enhance audience engagement. Recent deployments of dual-stream translation and captioning demonstrate how Live AI Transcription for conferences can support multilingual output live on screen.

3. Enterprise Communications and Analytics

Live AI Transcription systems are increasingly integrated into enterprise workflows, powering meeting transcripts, compliance logging, and real-time analytics dashboards. Low-latency transcription feeds into automatic summarization, agent assist tools, and live dashboards that depend on instantaneous text for decision-making.

4. Healthcare Documentation

AI-based speech recognition is transforming clinical documentation by reducing the administrative burden on clinicians. Systematic reviews indicate that ASR systems improve documentation efficiency, although performance varies by clinical context and model design — highlighting the need for careful validation in mission-critical environments.

Challenges and Limitations

1. Noise and Acoustic Variability

Real-world audio conditions introduce noise, overlapping speakers, and variable microphone quality, which remain among the most challenging issues for Live AI Transcription. Accuracy degrades significantly under these conditions unless advanced noise suppression, speaker separation, and domain adaptation techniques are employed.

2. Accent, Dialects, and Bias

Speech models often exhibit performance disparities across accents and dialects due to the imbalanced nature of their training data. This can lead to higher error rates for underrepresented speech patterns, raising fairness and inclusion concerns.
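Such disparities only become visible when error rates are broken down by speaker group instead of being reported as one aggregate figure. The sketch below assumes each evaluated utterance has already been scored and tagged as a tuple of group label, word errors, and reference word count; the record format, the group labels, and the numbers in the usage example are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def wer_by_group(records: Iterable[Tuple[str, int, int]]) -> Dict[str, float]:
    """Aggregate WER per group from (group, word_errors, reference_words).

    Errors and words are summed before dividing, so long utterances
    weigh more than short ones, as in a corpus-level WER.
    """
    errors: Dict[str, int] = defaultdict(int)
    words: Dict[str, int] = defaultdict(int)
    for group, word_errors, reference_words in records:
        errors[group] += word_errors
        words[group] += reference_words
    return {group: errors[group] / words[group]
            for group in words if words[group] > 0}


if __name__ == "__main__":
    # Hypothetical scores, for illustration only.
    scored = [("accent_a", 2, 50), ("accent_a", 3, 50),
              ("accent_b", 9, 60), ("accent_b", 6, 40)]
    rates = wer_by_group(scored)
    print(rates)
    print("largest gap:", max(rates.values()) - min(rates.values()))
```

Tracking the gap between the best and worst group alongside overall WER gives a simple signal of whether a model update narrows or widens the disparity.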

3. Ethical and Privacy Concerns

Live transcription captures sensitive personal and professional speech content in real time. In cloud-based architectures, data transmission and storage introduce privacy risks unless encrypted and managed in compliance with regulatory frameworks. On-device alternatives reduce some risks but may face other security constraints depending on local access controls. (Source: Speech-to-Text Technology in 2025: The Next Frontier of Accessibility and Productivity)

Future Directions

1. Model Adaptation and Customization

Emerging research emphasizes adaptive models that tune transcription behavior for specific domains or speaker populations, improving accuracy in specialized contexts such as legal proceedings or scientific presentations. Supporting dynamic vocabulary updates and contextual awareness will push Live AI Transcription toward more reliable and personalized performance.

2. Multilingual Real-Time Transcription

Progress in multilingual ASR models promises real-time transcription across dozens of languages with native-level accuracy. Advances in cross-lingual training and self-supervised learning are key to scaling real-time ASR beyond dominant global languages.

3. Integration With Conversational AI

Hybrid systems that combine Live AI Transcription with real-time conversational AI agents promise tightly integrated voice interfaces where speech is transcribed, understood, and responded to on the fly. These capabilities will enable more natural human-machine dialogue across voice assistants, robotics, and interactive applications.

Summary of Live AI Transcription Platforms

Live AI Transcription has matured from niche research into a core enabling technology for accessibility, communications, and real-time analytics. With advances in end-to-end ASR models, ultra-low-latency inference, and hybrid NLP-enhanced pipelines, real-time speech-to-text now delivers near-instant, high-accuracy output across an increasingly wide range of conditions. Nonetheless, overcoming noise, accent variability, and privacy concerns will remain paramount as the technology evolves toward increasingly ubiquitous deployment. Continued research, thoughtful engineering, and ethical stewardship will shape the next generation of Live AI Transcription platforms.

Rick Lee

Project Manager – Event Technology

With over 10 years of experience in event technology, Rick is an expert in integrating cutting-edge tech solutions for seamless event execution. His expertise includes audio-visual setups, interactive displays, and live-streaming technologies. Rick’s innovative approach ensures every event is technologically advanced and highly engaging.
